Can ChatGPT Be Detected? Tools, Methods, Accuracy & Real-World Limits

Yes, text written with ChatGPT can sometimes be identified, but never with complete certainty.

There is a common belief that detection software can instantly tell whether something was written by a person or by a machine. In reality, no tool today provides a 100% guaranteed verdict. Every system works on probability, patterns, and statistical signals rather than proof.

In 2026, automated writing tools are used across blogs, e-commerce stores, academic drafts, product descriptions, emails, and even legal summaries. Because of this widespread use, the ability to distinguish human from machine writing has become important for publishers, teachers, recruiters, and search platforms. Yet the subject is more nuanced than many headlines suggest.

Detection is not a lie detector. It is closer to handwriting analysis: it studies rhythm, structure, and predictability to estimate authorship. Understanding how this works, what tools exist, and where the limits lie helps content creators make smarter decisions instead of relying blindly on software scores.

What Does “Detecting ChatGPT Content” Mean?

Detecting ChatGPT content does not mean spotting a single word or phrase that proves machine origin. Instead, detection tools evaluate language behavior: the less visible patterns formed by sentence construction, vocabulary selection, and consistency.

Most systems attempt to answer questions such as:

- Are most sentences the same length without much variation?

- Are the same words or phrases repeated too often?

- Do the sentences connect too perfectly, almost like a template?

- Does the writing feel emotionless or missing a personal touch?

- Is the overall structure too predictable or formula-like?

The goal is not to “catch” someone but to estimate likelihood. The output is usually a percentage score or a confidence level rather than a definitive label. For example, a detector may say “78% likely machine-generated,” which still leaves room for doubt.

How AI Detection Tools Work

Detection platforms rely on large training datasets containing both human-written and machine-generated text. By comparing new content against these patterns, the system estimates authorship probability.

Below are the primary techniques used:

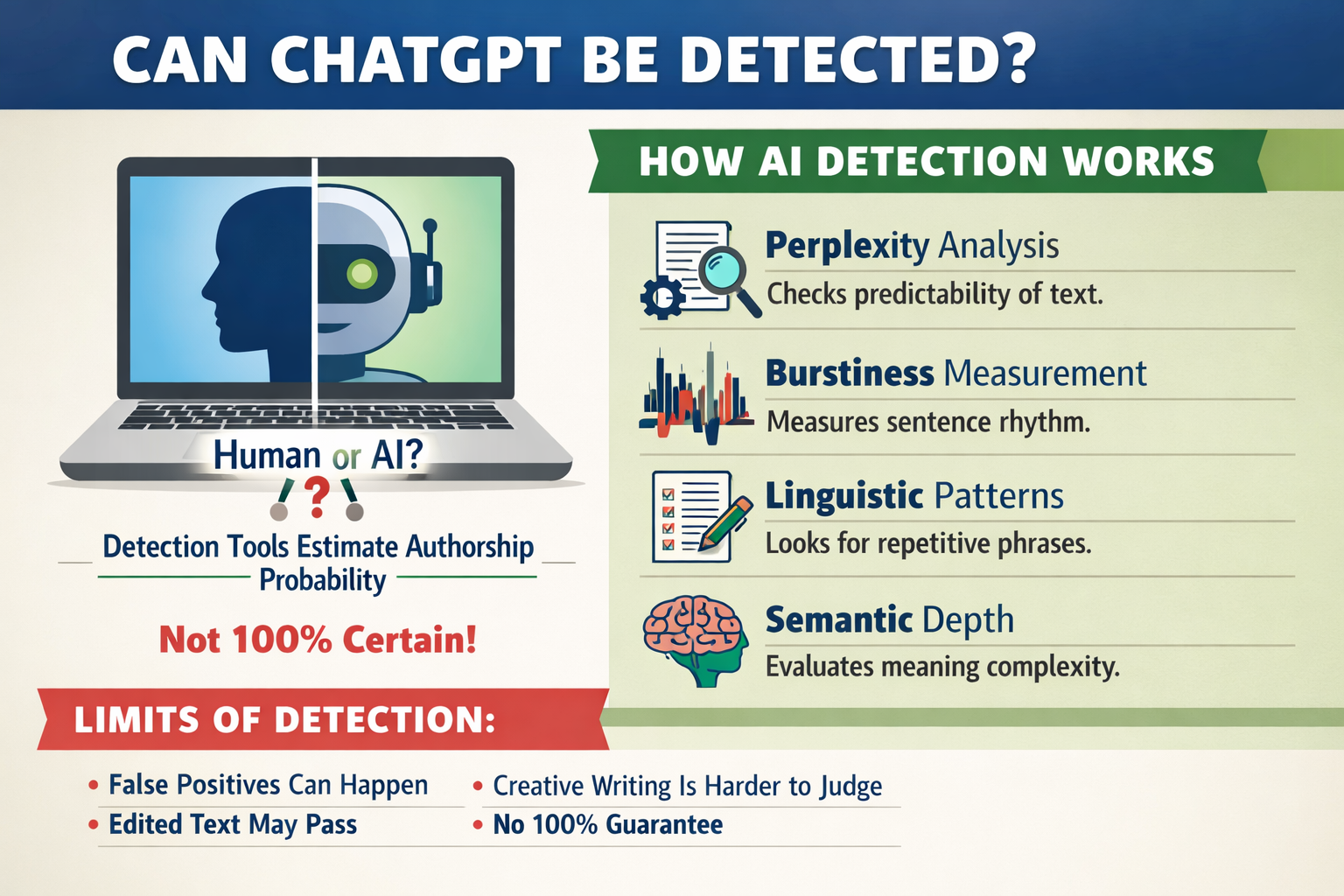

1. Perplexity Analysis

Perplexity measures how predictable a text is to a language model. Machine-written text often follows statistically probable word sequences, making it easier for a model to guess the next word, so its perplexity tends to be low. Human writing tends to include unexpected phrasing and variation, which raises perplexity.
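To make the idea concrete, here is a minimal sketch that assumes a toy unigram model with add-one smoothing. Real detectors rely on large neural language models, so the function names and the modelling choice here are illustrative assumptions rather than how any particular tool works.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase the text and split on whitespace (a deliberately crude tokenizer)."""
    return text.lower().split()


def unigram_perplexity(reference_text, sample_text):
    """Perplexity of sample_text under a unigram model fit on reference_text.

    Uses add-one (Laplace) smoothing so unseen words get a small, nonzero
    probability. Lower values mean the sample is more predictable to the model.
    """
    ref_tokens = tokenize(reference_text)
    sample_tokens = tokenize(sample_text)
    if not ref_tokens or not sample_tokens:
        raise ValueError("both texts must contain at least one token")

    counts = Counter(ref_tokens)
    # Vocabulary size plus one slot reserved for any unseen word.
    vocab_size = len(counts) + 1
    total = len(ref_tokens)

    log_prob_sum = 0.0
    for token in sample_tokens:
        prob = (counts[token] + 1) / (total + vocab_size)
        log_prob_sum += math.log(prob)

    # Perplexity is the exponential of the average negative log-probability.
    return math.exp(-log_prob_sum / len(sample_tokens))
```

Calling `unigram_perplexity(reference, sample)` with a sample that reuses the reference's common words gives a lower value than a sample full of words the model has never seen, which is the contrast detectors look for.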

2. Burstiness Measurement

Burstiness evaluates sentence rhythm. Humans naturally mix short and long sentences, fragments, and varied punctuation. Machine drafts may have more uniform sentence lengths. Detectors measure this rhythm to identify unnatural consistency.
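One simple way to put a number on this rhythm is the coefficient of variation of sentence lengths. The sketch below assumes that definition and a naive sentence splitter; commercial detectors do not publish their exact formulas, so treat it as an illustration only.

```python
import re
import statistics


def sentence_lengths(text):
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = re.split(r"[.!?]+", text)
    return [len(s.split()) for s in sentences if s.strip()]


def burstiness(text):
    """Coefficient of variation (standard deviation / mean) of sentence lengths.

    Values near 0 mean very uniform sentences; larger values mean the
    writer mixes short and long sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)
```

A passage made of three four-word sentences scores 0.0, while a passage that mixes a one-word sentence with a fifteen-word one scores well above that.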

3. Linguistic Pattern Recognition

Software evaluates grammar stability, repeated transitions, phrasing uniformity, and vocabulary recycling. Excessively polished or repetitive writing can trigger suspicion.
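Two of these signals, vocabulary recycling and repeated transitions, are easy to approximate. The sketch below assumes a type-token ratio and a small, hypothetical list of transition words; production tools would use far richer features.

```python
import re
from collections import Counter

# Hypothetical list of transition words chosen for illustration.
TRANSITIONS = {"however", "moreover", "furthermore", "additionally", "therefore"}


def words(text):
    """Lowercase alphabetic tokens (apostrophes kept inside words)."""
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(text):
    """Distinct words divided by total words; lower means more recycling."""
    tokens = words(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def transition_counts(text):
    """How often each listed transition word appears in the text."""
    return Counter(t for t in words(text) if t in TRANSITIONS)
```

A low type-token ratio combined with the same transition appearing again and again is the kind of uniformity this technique is meant to surface, though neither number proves anything on its own.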

4. Semantic Depth Checks

Some tools analyze whether the content demonstrates layered reasoning or simply rephrases known structures. Shallow meaning density may suggest automated generation.

5. Behavioral and Metadata Signals

Advanced enterprise tools sometimes analyze editing history, typing speed, or revision timestamps when available. This is more common in academic or corporate environments than in public blogging.

Popular ChatGPT Detection Tools

Several well-known platforms attempt to identify machine-generated text. Each tool approaches the problem slightly differently and is suited for different audiences.

1. HumanizeAI.com’s AI Detector

HumanizeAI’s Detector is designed to evaluate whether text appears naturally written or machine-generated while also helping improve readability and authenticity. Users can paste or upload content and receive a quick probability score within seconds. The tool focuses on analyzing structure, tone balance, and writing flow rather than only issuing a raw percentage.

A notable advantage is its emphasis on natural language refinement. Many bloggers, marketers, and website owners use it not just for detection but also to adjust drafts that feel overly mechanical. The platform highlights segments that may sound artificial and encourages rewriting with clearer expression and smoother rhythm. Privacy is also a key feature, as submitted text is not stored or reused. This dual focus on detection and improvement makes it practical for both content review and polishing before publication.

2. Grammarly Detector

Grammarly includes a detection feature within its broader writing assistant. It flags sections that resemble automated phrasing while simultaneously offering grammar and clarity improvements. Its convenience makes it popular among everyday writers, though it is not designed for deep forensic analysis.

3. Originality.ai

Publishers, agencies, and SEO teams frequently use Originality.ai. It combines plagiarism scanning with authorship probability scoring. Reports are detailed and suitable for professional environments, but like all tools, the results still require human interpretation.

4. GPTZero

GPTZero gained attention in academic communities. It relies heavily on perplexity and burstiness calculations to estimate origin. It can be effective for essays and structured reports but may incorrectly flag formal human writing.

5. Copyleaks

Copyleaks offers both plagiarism checks and machine-authorship analysis. Educational institutions and enterprises often prefer it because of integration options and multi-layer reporting systems.

Accuracy of AI Detectors – Are They Reliable?

Reliability varies widely depending on writing style, editing level, and subject matter. No system guarantees flawless precision.

- False Positives: Human-written content may be flagged as machine-generated, especially if the tone is formal, technical, or highly structured. Academic papers and instruction manuals often fall into this category.

- False Negatives: Edited machine drafts or hybrid content can pass detection. When writers inject personal experiences, restructure paragraphs, and vary sentence rhythm, tools may struggle to classify origin accurately.

- Context Sensitivity: Creative storytelling, humor, and conversational posts are harder to judge because their unpredictability overlaps with human patterns. Technical documentation is easier but still not certain.

In practical terms, detection tools provide guidance, not proof. Their output should be considered alongside manual review and contextual understanding.
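The effect of false positives is easier to see with a quick calculation. The sketch below applies Bayes' rule to estimate how often a flagged text is really machine-generated. The rates are made-up illustrative numbers, not measurements of any real detector.

```python
def flag_precision(true_positive_rate, false_positive_rate, machine_share):
    """Probability that a flagged text is actually machine-generated (Bayes' rule).

    true_positive_rate: fraction of machine texts the detector flags
    false_positive_rate: fraction of human texts the detector wrongly flags
    machine_share: fraction of all submitted texts that are machine-generated
    """
    flagged_machine = true_positive_rate * machine_share
    flagged_human = false_positive_rate * (1 - machine_share)
    total_flagged = flagged_machine + flagged_human
    if total_flagged == 0:
        return 0.0
    return flagged_machine / total_flagged


# Illustrative numbers only: 90% detection, 5% false alarms, 10% machine texts.
precision = flag_precision(0.90, 0.05, 0.10)
print(f"{precision:.2f}")  # prints 0.67
```

Under these assumed rates, roughly one in three flagged texts would be human-written, which is why a flag alone should not settle the question.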

Limitations of ChatGPT Detection

Despite improvements, detection systems face ongoing challenges:

- Rapid Model Evolution: Writing systems improve quickly, making older detection patterns outdated.

- Editing Flexibility: Even minor manual edits can drastically change probability scores.

- Language Diversity: Mixed languages and regional dialects reduce accuracy.

- Creative Variation: Poetry, humor, and storytelling blur statistical lines.

- Score Misinterpretation: Numbers can be misleading when taken as absolute truth.

These limits show why automated detection should never be treated as a courtroom verdict.

Can ChatGPT Avoid Detection?

Avoidance is not always about deception. Many writers simply want their drafts to sound natural and engaging. Detection becomes more difficult when:

- Personal anecdotes or real-life experiences are added

- Sentence lengths vary naturally

- Opinions and emotional tone are introduced

- Manual editing reshapes structure and rhythm

- Multiple writing styles are blended

The goal should not be to “trick” software but to produce clear, valuable, and original content that genuinely serves readers.

Risks of Relying Only on Detection Tools

Excessive dependence on automated systems can create unintended consequences:

- Misjudging Authentic Work: Skilled writers may be wrongly flagged.

- Erosion of Trust: Over-policing discourages creativity and openness.

- Legal and Ethical Concerns: Decisions based purely on software scores can lead to disputes.

- Quality Neglect: Detection focuses on origin, not usefulness or depth.

Human review remains essential. Technology should assist judgment, not replace it.

Best Practices for Using Writing Tools Responsibly

Responsible usage is not about hiding the origin of content; it is about maintaining quality, authenticity, and trust. Writing tools are most effective when they support human creativity rather than replace it. The goal should always be to produce content that is helpful, accurate, and genuinely valuable to readers.

1. Blend Automation with Personal Insight

Writing assistants are excellent for generating ideas, outlines, and rough drafts, but the final message should always carry a human voice. Personal experience, industry exposure, and subject-matter understanding add layers that automated drafts cannot fully replicate. When a writer reviews and reshapes the content using their own judgment, the result becomes more nuanced, more credible, and more engaging. This balance ensures efficiency without sacrificing authenticity.

2. Add a Unique Perspective

Original thought is the strongest indicator of genuine writing. Including case studies, real examples, customer stories, or personal lessons transforms generic information into meaningful content. A paragraph that explains what happened, why it mattered, and what was learned naturally feels human because it reflects lived experience rather than surface-level summaries. Unique perspectives also increase reader trust and improve long-term content value.

3. Edit Thoroughly and Intentionally

Editing is where raw drafts become polished writing. Instead of only correcting grammar, writers should review sentence rhythm, paragraph flow, and clarity of ideas. Repetitive phrasing, overly formal expressions, or predictable transitions should be replaced with natural variations. Reading the text aloud, restructuring sentences, and simplifying complex wording often reveal areas that feel mechanical. Intentional editing improves both readability and credibility.

4. Focus on Value Rather Than Origin

Readers rarely care whether a draft began as a suggestion or a blank page. What matters is whether the information is useful, accurate, and easy to understand. Content that solves problems, answers real questions, or offers practical insights will always outperform content created merely to fill space. When value becomes the priority, concerns about authorship naturally become less significant.

5. Be Transparent When Required

In educational, corporate, or research environments, transparency can prevent confusion and build trust. Disclosure does not weaken credibility; in many cases, it strengthens it by showing honesty and ethical awareness. Clear communication about how tools were used, whether for outlining, proofreading, or brainstorming, demonstrates professionalism and accountability rather than dependency.

Future of Detection Technology

Detection systems are evolving at the same pace as writing technologies. As language models grow more advanced, detection methods also become more sophisticated. However, the relationship between the two is dynamic rather than final: every improvement in one area prompts innovation in the other.

1. Behavioral Authorship Tracking

Future systems may analyze typing speed, editing habits, and revision history to understand how a document was created. This moves detection beyond text patterns into behavioral analysis, offering deeper context rather than surface evaluation.

2. Deeper Semantic Fingerprinting

Instead of only studying sentence structure, emerging tools may evaluate meaning depth, logical connections, and conceptual layering. This approach attempts to understand how ideas are formed, not just how words are arranged.

3. Cross-Platform Identity Analysis

Some advanced systems may compare writing styles across multiple platforms or documents to establish consistency in authorship. This technique could be useful in academic or enterprise environments where identity verification matters.
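As a rough illustration of comparing writing styles, the sketch below assumes a classic stylometric approach: represent each document by how often it uses a handful of function words, then compare the vectors with cosine similarity. The word list is hypothetical and far smaller than anything a real system would use.

```python
import math
import re
from collections import Counter

# Hypothetical, tiny set of function words chosen for illustration.
FUNCTION_WORDS = ["the", "of", "and", "to", "a", "in", "that", "but", "i", "it"]


def style_vector(text):
    """Relative frequency of each function word in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid dividing by zero on empty input
    return [counts[w] / total for w in FUNCTION_WORDS]


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)
```

Scores close to 1.0 suggest similar function-word habits across two documents, but short texts make the estimate noisy, so a score like this would be one hint among many rather than proof of shared authorship.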

4. Multilingual Accuracy Improvements

As global content grows, detection systems are expanding beyond English. Improved multilingual models will aim to better understand regional grammar, dialectal variations, and cultural phrasing.

5. Hybrid Collaboration Indicators

Future tools may identify whether a piece of writing is entirely human, entirely automated, or collaboratively created. This recognizes the growing reality that many modern documents are produced through mixed workflows rather than single sources.

Conclusion

ChatGPT detection is possible but never perfectly reliable. Tools analyze perplexity, burstiness, and linguistic patterns to estimate authorship probability, yet false positives and negatives remain unavoidable. Editing flexibility and rapid model evolution keep the landscape constantly shifting.

Rather than viewing detection as a battle, the smarter approach is responsible usage and thoughtful refinement. High-quality writing, originality, and reader value ultimately matter more than technical classification. Detection tools should serve as supportive aids, not final authorities.

Frequently Asked Questions

1. Can ChatGPT content ever be 100% detected?

No. Detection systems work on probability and pattern analysis, not definitive proof.

2. Which detector is most accurate?

Accuracy depends on context and writing style. Tools like HumanizeAI, Originality.ai, GPTZero, and Copyleaks are widely used, but none are flawless.

3. Is AI detection legal?

Yes, using detection software is legal. However, making serious decisions based solely on automated scores can raise ethical or contractual concerns.

4. Do search engines penalize machine-written content?

Search platforms generally prioritize usefulness and quality rather than origin. Search engines are more likely to penalize low-value or spam content than well-written material.

5. How can writers stay safe using writing tools?

Edit thoroughly, add personal insight, maintain originality, and prioritize reader value over mechanical output.